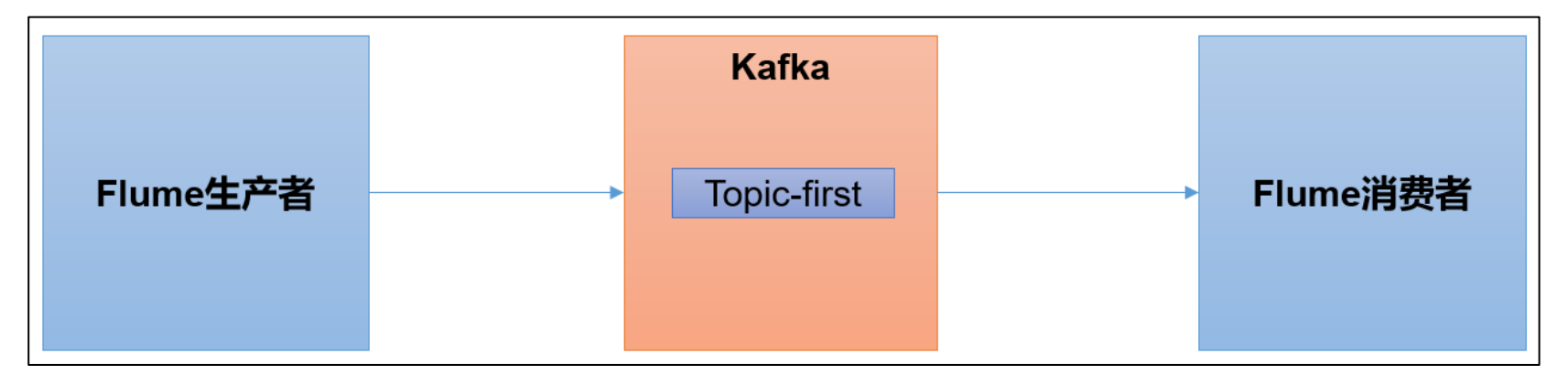

Flume 是一个在大数据开发中非常常用的组件。可以用于 Kafka 的生产者,也可以用于Flume 的消费者。

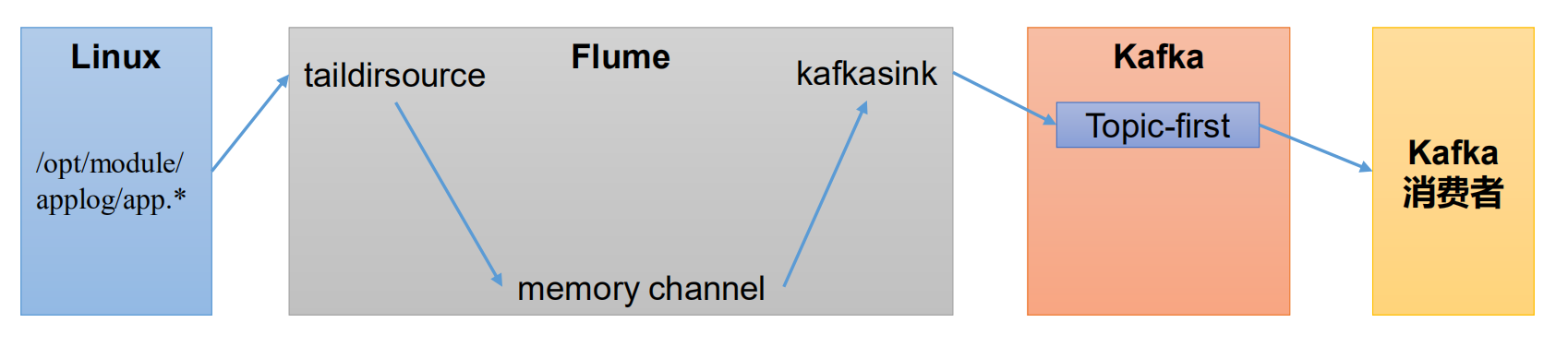

Flume 生产者

启动 kafka 集群

# 启动zookeeper集群

zk.sh start

# 启动kafka集群

kf.sh start

启动 kafka 消费者

kafka-console-consumer.sh -bootstrap-server hadoop100:9092 --topic first

配置 Flume

在 hadoop100 节点的 Flume 的 conf 目录下创建 file_to_kafka.conf

cd $FLUME_HOME/conf

vim file_to_kafka.conf

# 完整内容如下

# 1 组件定义

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# 2 配置 source

a1.sources.r1.type = TAILDIR

a1.sources.r1.filegroups = f1

a1.sources.r1.filegroups.f1 = /opt/module/applog/app.*

a1.sources.r1.positionFile = /opt/module/flume-1.7.0/taildir_position.json

# 3 配置 channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# 4 配置 sink

a1.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink

a1.sinks.k1.kafka.bootstrap.servers = hadoop100:9092,hadoop101:9092,hadoop102:9092

a1.sinks.k1.kafka.topic = first

a1.sinks.k1.kafka.flumeBatchSize = 20

a1.sinks.k1.kafka.producer.acks = 1

a1.sinks.k1.kafka.producer.linger.ms = 1

# 5 拼接组件

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

启动 Flume

flume-ng agent -c conf/ -n a1 -f $FLUME_HOME/conf/file_to_kafka.conf

向/opt/module/applog/app.log 里追加数据,查看 kafka 消费者消费情况

mkdir /opt/module/applog

echo hello >> /opt/module/applog/app.log

观察 kafka 消费者,能够看到消费的 hello 数据

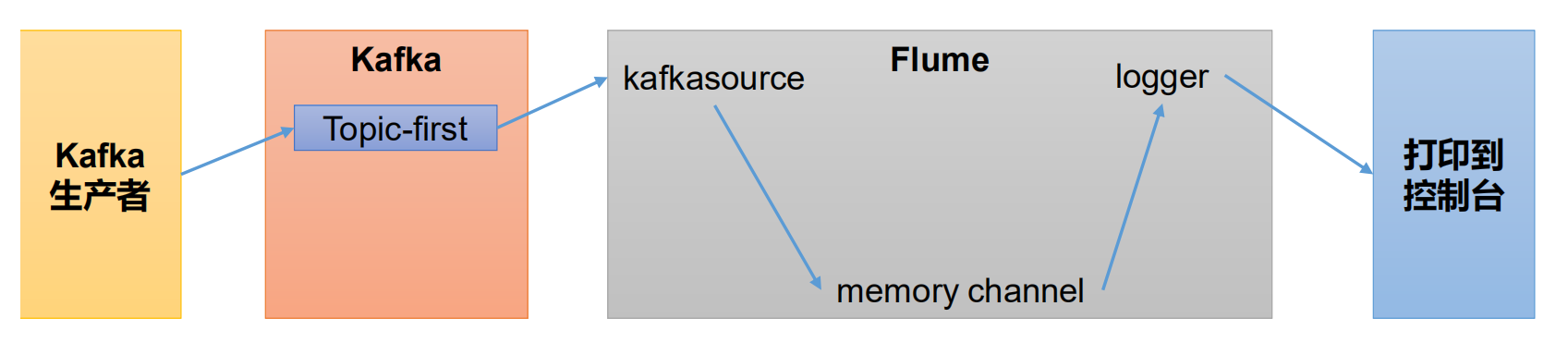

Flume 消费者

配置 Flume

在 hadoop100 节点的 Flume 的/opt/module/flume-1.7.0/conf 目录下创建 kafka_to_file.conf

cd $FLUME_HOME/conf

vim kafka_to_file.conf

# 完整内容如下

# 1 组件定义

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# 2 配置 source

a1.sources.r1.type = org.apache.flume.source.kafka.KafkaSource

a1.sources.r1.batchSize = 50

a1.sources.r1.batchDurationMillis = 200

a1.sources.r1.kafka.bootstrap.servers = hadoop100:9092

a1.sources.r1.kafka.topics = first

a1.sources.r1.kafka.consumer.group.id = custom.g.id

# 3 配置 channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# 4 配置 sink

a1.sinks.k1.type = logger

# 5 拼接组件

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1

启动 Flume

flume-ng agent -c conf/ -n a1 -f $FLUME_HOME/conf/kafka_to_file.conf -Dflume.root.logger=INFO,console

启动 kafka 生产者

kafka-console-producer.sh --bootstrap-server hadoop100:9092 --topic first

输入数据,例如:hello world,观察flume控制台输出的日志

评论区